Conv2d

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

| import torch.nn as nn

from PIL import Image

from torchvision import transforms as T

img_path = [path to image]

img = Image.open(img_path)

transform = T.ToTensor()

img_tensor = transform(img).unsqueeze(0)

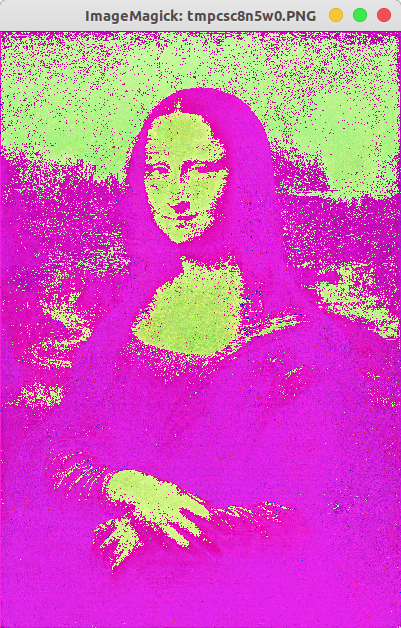

net = nn.Conv2d(3, 3, 3, stride=2)

out = net(img_tensor)

to_pil = T.ToPILImage()

print(out.shape)

out = out.squeeze(0)

print(out.shape)

img_pil = to_pil(out)

img_pil.show()

|

nn.Conv2d(3, 4, 3, stride=2)

nn.Conv2d(3, 3, 3, stride=2)

nn.Conv2d(3, 3, 3, stride=3)

nn.Conv2d(3, 3, 3, stride=3, padding=2)
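
To make the effect of `stride` and `padding` in the variants above concrete, here is a minimal sketch of the output-size arithmetic in plain Python. It assumes dilation 1 and a hypothetical 224x224 input (the size of the original image is not stated), and `conv2d_out_size` is an illustrative helper, not part of PyTorch.

```python
def conv2d_out_size(n, kernel, stride=1, padding=0):
    """Output length along one spatial dimension, assuming dilation 1."""
    return (n + 2 * padding - kernel) // stride + 1


# (out_channels, kernel, stride, padding) for the four Conv2d variants above
variants = [
    (4, 3, 2, 0),
    (3, 3, 2, 0),
    (3, 3, 3, 0),
    (3, 3, 3, 2),
]

# Assumed input size: 224x224 (hypothetical, chosen only for illustration)
shapes = []
for out_ch, k, s, p in variants:
    side = conv2d_out_size(224, k, s, p)
    shapes.append((out_ch, side, side))
    print(f"out_channels={out_ch}, stride={s}, padding={p} -> {(out_ch, side, side)}")
```

A larger stride shrinks the output, while padding adds a border to the input and so slightly enlarges it. Changing the second argument of `nn.Conv2d` only changes the number of output channels.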

MaxPool2d

Constraint: padding < kernel_size / 2. Otherwise PyTorch raises an error:

pad should be smaller than half of kernel size

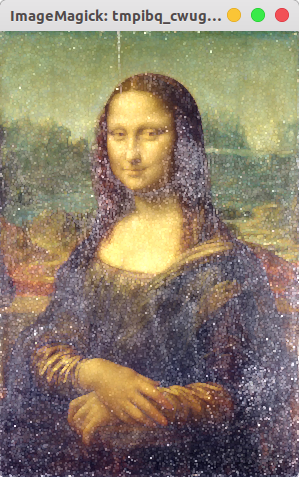

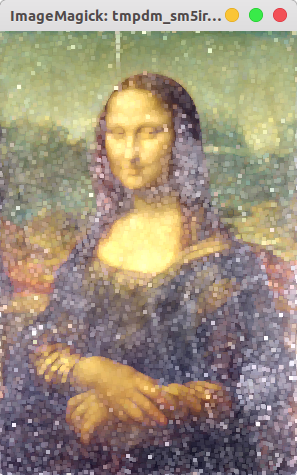

nn.MaxPool2d(kernel_size=8, stride=4, padding=1)

nn.MaxPool2d(kernel_size=16, stride=4, padding=1)

nn.MaxPool2d(kernel_size=32, stride=4, padding=1)
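
The three pooling settings above differ only in kernel size, so it may help to see how that changes the output size. The sketch below is plain Python, not PyTorch code: `pool_out_size` is a hypothetical helper, the 224x224 input is an assumption, and it assumes the default `ceil_mode=False` and dilation 1. The padding check follows the rule as stated in this section; PyTorch's own check may treat the boundary case differently.

```python
def pool_out_size(n, kernel_size, stride, padding=0):
    """Output length along one spatial dimension for a pooling layer."""
    # Padding rule as stated in the text: padding < kernel_size / 2
    if 2 * padding >= kernel_size:
        raise ValueError("pad should be smaller than half of kernel size")
    return (n + 2 * padding - kernel_size) // stride + 1


# Assumed 224x224 input; stride=4 and padding=1 as in the examples above
sizes = {k: pool_out_size(224, k, stride=4, padding=1) for k in (8, 16, 32)}
print(sizes)  # {8: 55, 16: 53, 32: 49}
```

With the stride fixed, a larger kernel only trims a few pixels from the output, but each output pixel summarizes a much larger window of the input.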

AvgPool2d

As with MaxPool2d, padding must be smaller than half of the kernel size.

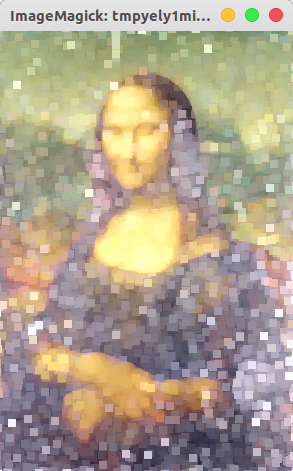

nn.AvgPool2d(kernel_size=8, stride=4, padding=1)

nn.AvgPool2d(kernel_size=16, stride=4, padding=1)

nn.AvgPool2d(kernel_size=32, stride=4, padding=1)
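
To contrast what the two pooling layers compute, here is a small plain-Python sketch on a 4x4 grid with a 2x2 window and stride 2. The helper `pool2d` is illustrative only and ignores padding; it is not how PyTorch implements these layers.

```python
def pool2d(grid, kernel, stride, reduce_fn):
    """Slide a kernel x kernel window over a 2D list and reduce each window."""
    rows = (len(grid) - kernel) // stride + 1
    cols = (len(grid[0]) - kernel) // stride + 1
    out = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            # Collect the values covered by the window at this position
            window = [
                grid[r * stride + i][c * stride + j]
                for i in range(kernel)
                for j in range(kernel)
            ]
            out_row.append(reduce_fn(window))
        out.append(out_row)
    return out


grid = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]

max_pooled = pool2d(grid, 2, 2, max)
avg_pooled = pool2d(grid, 2, 2, lambda w: sum(w) / len(w))
print(max_pooled)  # [[6, 8], [14, 16]]
print(avg_pooled)  # [[3.5, 5.5], [11.5, 13.5]]
```

Max pooling keeps the strongest value in each window, while average pooling smooths the window into its mean.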